Rethinking Success Beyond Standardized Tests

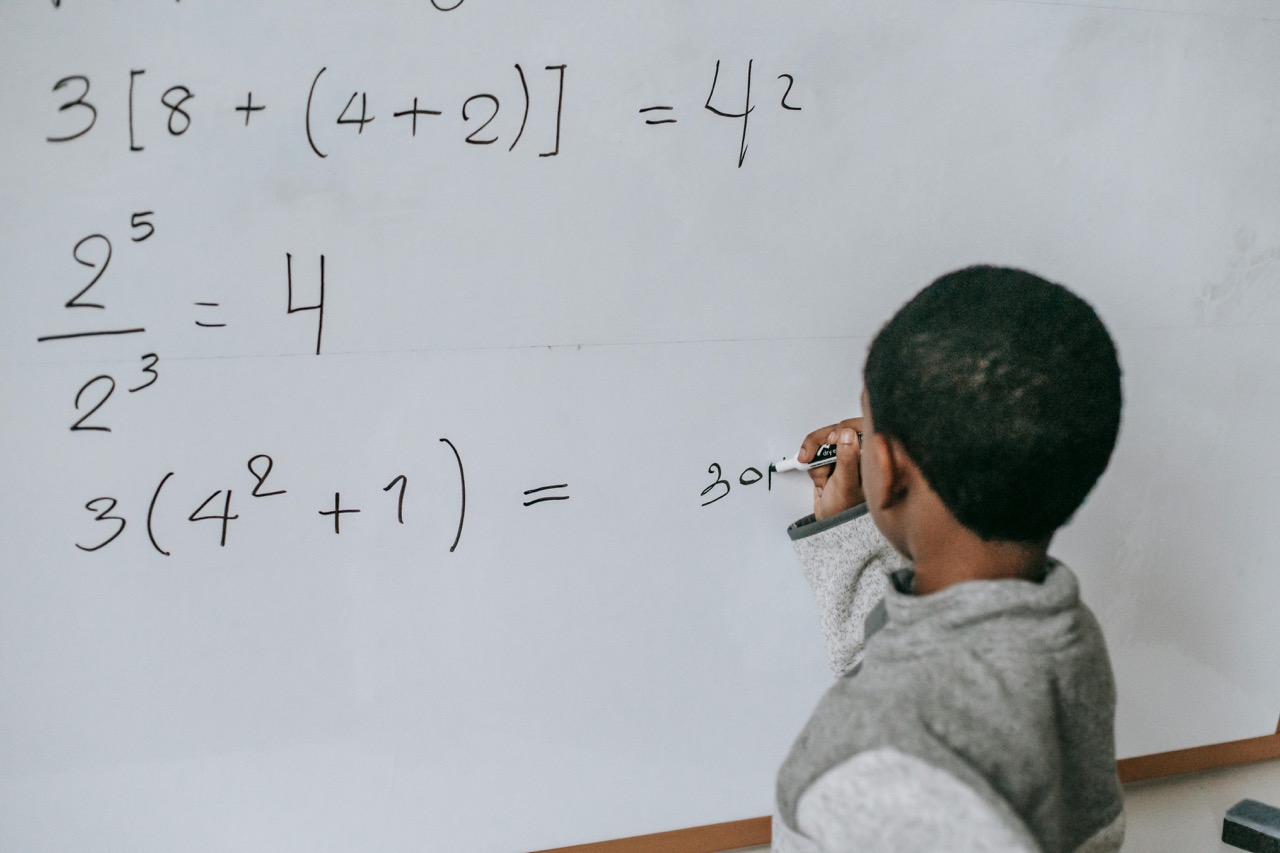

What we measure inevitably shapes what we teach. Traditional standardized tests capture a narrow slice of learning—basic literacy and numeracy—but they overlook the broader outcomes societies now demand: transfer, collaboration, creativity, ethical reasoning, and wellbeing. These dimensions of learning are not peripheral—they are the foundation of human development and civic capacity.

A next-generation measurement system must blend multiple forms of evidence to represent learning that truly matters. This argument applies equally to K–12 schools and higher education. Both sectors face the same challenge: how to measure not just what students know, but how they think, grow, and apply their knowledge to real-world contexts. Whether evaluating a third grader’s reasoning or a university student’s problem-solving in a capstone project, our metrics must evolve to capture meaningful, transferable learning.

Start with Purpose, Not Instruments

Measurement begins with intent. The essential question is not “What can we test?” but “What do we need to know to improve teaching and learning?” Metrics should help educators and policymakers answer pragmatic, value-driven questions:

- Are learners mastering core disciplinary concepts?

- Can they apply them in new and unfamiliar contexts?

- Are they developing habits of mind—curiosity, persistence, and metacognition—that sustain lifelong learning?

- Are systems closing or widening equity gaps?

When purpose leads, design follows. Clear goals ensure that data collection serves learning rather than bureaucracy.

The Portfolio Approach: Multiple Forms of Evidence

A credible and humane assessment ecosystem requires a portfolio of measures, not a single test. Performance tasks and interdisciplinary projects reveal whether learners can analyze, synthesize, and create knowledge. Portfolios show growth and reflection over time—capturing both process and product. Teacher and faculty judgments, calibrated through moderation and shared rubrics, bring professional expertise into accountability systems.

Low-stakes formative checks monitor fluency in foundational skills without distorting instruction. Student voice and wellbeing surveys highlight the conditions that support or hinder learning—belonging, motivation, and emotional safety. In higher education, these principles extend to capstone portfolios, project-based evaluation, and peer-reviewed creative work.

This pluralistic approach aligns assessment with how learning actually unfolds: iteratively, socially, and contextually. It also provides a fairer, more inclusive picture of growth—especially for students whose strengths may not fit traditional test molds.

AI as an Assessment Partner, Not an Arbiter

Artificial intelligence can expand what educators can measure, but it should never replace human judgment. Automated scoring can triage long-form responses, provide initial feedback, and identify patterns for review. Language models can suggest rubrics, extract themes from reflections, or detect inconsistencies across evidence sources. Retrieval-augmented systems can anchor feedback in exemplars and standards, improving accuracy and transparency.

However, human insight remains central. Teachers and professors interpret nuance, exercise empathy, and weigh contextual factors beyond algorithmic reach. The best systems of AI-assisted assessment embed teacher oversight, student agency, and explainable automation at every step.

Whether in a high school science class or a university design studio, AI should scaffold reflection and feedback, not substitute for professional judgment.

Designing for Equity and Accessibility

Assessment design reflects the values of those who create it. To promote fairness, tasks must be culturally responsive and accessible to all learners. Multiple modalities—writing, speaking, coding, performing—allow diverse strengths to shine. Tasks should draw on familiar contexts and examples that connect to students’ lived experiences. Accessibility features such as captions, alt text, flexible timing, and multilingual support must be standard practice.

Disaggregating results by gender, race, language background, and disability status helps identify systemic disparities and target support effectively. In higher education, similar equity considerations apply to grading norms, participation metrics, and internship or research opportunities. Equity, therefore, is not a corrective—it is the design principle itself.
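To make the idea of disaggregation concrete, here is a minimal sketch in Python. The records, the group labels, the 1-to-4 rubric scale, and the `min_group_size` threshold are all hypothetical, chosen only for illustration. The small-group suppression is an assumption added to show one way of keeping summaries from exposing individual students.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (student_id, language_background, rubric_score on a 1-4 scale)
records = [
    ("s1", "multilingual", 3),
    ("s2", "multilingual", 2),
    ("s3", "monolingual", 4),
    ("s4", "monolingual", 3),
    ("s5", "multilingual", 4),
]

def disaggregate(rows, min_group_size=2):
    """Return the mean score per group, omitting groups too small to report safely."""
    groups = defaultdict(list)
    for _student, group, score in rows:
        groups[group].append(score)
    return {
        group: round(mean(scores), 2)
        for group, scores in groups.items()
        if len(scores) >= min_group_size
    }

summary = disaggregate(records)

# Difference between the highest and lowest group means: a prompt for
# further inquiry, not a verdict about any group or educator.
gap = max(summary.values()) - min(summary.values())
print(summary, gap)
```

A gap like this only flags where to look. Interpreting it still depends on context, sample size, and the judgment of the educators involved.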

Validity, Reliability, and Continuous Improvement

Good assessment requires both rigor and reflection. Instruments must align with learning progressions, that is, empirical models of how understanding deepens over time. Piloting assessments across diverse schools and campuses helps verify that they measure what they claim to measure. Moderation and exemplar libraries calibrate scoring across educators and institutions.
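One way a moderation session might check how well two scorers are calibrated is an agreement statistic. The sketch below uses Cohen's kappa, a common choice that this article does not itself prescribe. The function name, the two teachers' scores, and the 1-to-4 rubric scale are hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Agreement between two raters on the same items, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items.")
    n = len(rater_a)
    # Share of items on which the two raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's score distribution.
    count_a = Counter(rater_a)
    count_b = Counter(rater_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical rubric scores from two teachers on the same eight portfolios.
teacher_a = [3, 2, 4, 3, 1, 2, 3, 4]
teacher_b = [3, 2, 3, 3, 1, 2, 4, 4]

kappa = cohens_kappa(teacher_a, teacher_b)
print(f"kappa = {kappa:.2f}")
```

Values near 1 suggest the shared rubric is being read consistently, while lower values point to items worth discussing against exemplars. Any threshold for "good enough" is a local decision rather than a fixed rule.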

Triangulating data from multiple independent sources—teacher feedback, peer review, project work, and reflective writing—strengthens validity. Continuous validation prevents measurement drift and sustains trust. Like good teaching, assessment must be adaptive, transparent, and evidence-driven.

Making Data Meaningful and Actionable

Data is only as useful as the insight it provides. Dashboards for educators and faculty should highlight what matters most: student strengths, misconceptions, and actionable next steps. Learners should have ownership of their assessment data—curating portfolios, tracking their growth, and sharing micro-credentials that represent authentic competencies.

At the system level, institutions should publish privacy-respecting summaries that enable accountability and improvement without ranking or penalizing. When teachers, professors, and students use data collaboratively, assessment becomes a process of reflection and empowerment rather than surveillance.

Making Learning Come Alive Through Curiosity and Creativity

In both K–12 and higher education, assessment should not only evaluate learning—it should also inspire it. When education materials are meaningful and emotionally resonant, students engage with curiosity rather than compliance.

As part of my science communication work, I’ve explored how creative media, such as educational songs and visual storytelling, can make complex ideas more approachable for young learners. For instance, in the video “What is Physics: Introductory Physics Song for Kids,” we use rhythm, melody, and visuals to introduce foundational concepts in an accessible and memorable way.

Such resources demonstrate one approach among many: helping learners connect emotionally and intellectually with content. Videos, interactive experiments, storytelling, and local problem-solving projects all play vital roles in nurturing curiosity, agency, and a lasting love of learning.

Measuring What Truly Matters

The future of educational measurement lies in aligning metrics with the deeper purposes of education itself. When systems value mastery, creativity, transfer, wellbeing, and equity—and when evidence is made practical for educators and meaningful for learners—assessment evolves from a gatekeeper into a guide.

It becomes a mirror that reflects growth, a compass that points toward opportunity, and a bridge connecting learning to life. By reclaiming measurement as a human-centered practice, both schools and universities can ensure that what we measure truly advances what matters most: curiosity, compassion, and the capacity to learn for life.