Higher education's AI response requires systems thinking, not a triage or 'you do you' mindset — which places students last.

As First Responders, Faculty Must Level Up

The emergence of generative AI has shaken higher education to its core, exposing many of the weaknesses caused by decades of underfunding, problematic internal financial models, and external legislative decisions made in a vacuum. These include oversized classes, over-reliance on multiple-choice and other speed-grading shortcuts, product-over-process priorities, and factory-style modularity whereby students and professors often pass through each other’s lives too quickly to remember names.

Such arrangements, and the cultural values driving them, have been subverting optimal teaching and learning since well before ChatGPT’s signal emergence in November 2022. Indeed: they, and the problems they index, set the stage for generative AI’s instant appeal in certain quarters. It is in this context that AI’s threats and promises must be met.

Our experience at San Diego State University (SDSU), one of 22 institutions within the California State University (CSU) system, confirms that the time has come to move beyond a triage mindset toward the pedagogical distress that AI has induced. The triage approach initially appealed largely because it fit so well with the ideal of academic freedom, in which each instructor is at liberty to adjust the syllabus in keeping with their best professional judgment. Plus, of course, we were all blindsided. But the turn toward individual, assignment-by-assignment, class-by-class adjustment (which in many cases included doing nothing, head in the sand) has left students facing a confused patchwork of often contradictory positions on how, when, and whether to use AI educationally.

We can, and must, do better than this. Imposing systems thinking — designing AI literacy instruction into the curriculum strategically and coherently at the whole-major or whole-program level — is an important part of the solution.

What We’ve Learned from Course-Level Retrofits

Don’t misunderstand: Any guidance on AI in the classroom is helpful. Students, even those who would prefer not to use AI directly, are hungry for AI-related instruction, including regarding responsible use. As one student respondent to a campuswide AI survey launched at SDSU in Fall 2023 put it, “Please just tell us what to do and be clear about it.” The need for clarity intensified as AI’s pervasiveness grew.[1]

Here, the ‘retrofit’ movement has proven invaluable. Codified by Zach Justus, Director of Faculty Development at CSU Chico, with help from Joshuah Whittinghill and Allison McConnell, the retrofit includes use of a worksheet to identify weak points and plan how to fix them. First, instructors list their student learning outcomes or SLOs; then, they list all assignments stemming from those, and identify any that AI might disrupt. If AI can disrupt an assignment, then the instructor must change either the assignment, or the SLO itself.
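The worksheet logic just described can be sketched in a few lines of Python. This is only an illustration of the steps as summarized here, not the actual worksheet: the `Assignment` class, the `retrofit_worklist` function, and the sample outcomes and assignments are all hypothetical, and the `ai_can_disrupt` flag stands in for the instructor's own judgment rather than any automated check.

```python
from dataclasses import dataclass


@dataclass
class Assignment:
    name: str
    slo: str              # the student learning outcome this assignment assesses
    ai_can_disrupt: bool  # instructor's judgment call (hypothetical field)


def retrofit_worklist(slos, assignments):
    """Group AI-disruptable assignments under the SLO they stem from."""
    worklist = {slo: [] for slo in slos}
    for a in assignments:
        if a.ai_can_disrupt:
            # setdefault tolerates an assignment tied to an unlisted SLO
            worklist.setdefault(a.slo, []).append(a.name)
    # Keep only SLOs that have at least one flagged assignment.
    return {slo: names for slo, names in worklist.items() if names}


# Hypothetical course data, for illustration only.
slos = ["Construct an evidence-based argument", "Collaborate in field data collection"]
assignments = [
    Assignment("Take-home essay", slos[0], True),
    Assignment("In-class debate", slos[0], False),
    Assignment("Group site visit", slos[1], False),
]

flagged = retrofit_worklist(slos, assignments)
for slo, names in flagged.items():
    print(f"{slo}: revise {names} or revisit the SLO itself")
```

Each entry in the resulting worklist marks a decision point: the instructor changes either the flagged assignment or the outcome it serves.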

The retrofit idea, both as offered by Justus and more generally, spread quickly throughout the higher education ecosystem, owing both to its simplicity and to the depth of the need. Here at SDSU, our Center for Teaching and Learning has run two retrofit intensives: one in Summer 2025, with funding from the CSU’s AI Education Innovation Challenge (AIEIC) program (DJ Hopkins, PI), and another over Winter Break in early 2026, with internal resourcing. Importantly, participation was voluntary and faculty were compensated. Moreover, rather than being squeezed into the academic year, the training was held when time could be more easily set aside. Most faculty, however, have carried out DIY retrofits on their own because, as the saying goes, ‘needs must.’

Yet, these course-by-course directives, even when clearly communicated, have fallen short. Why? In the words of an SDSU faculty interviewee for Ithaka S+R’s national AI literacy project, in which our campus Library is participating, “[Students] are taking like four to five classes a semester and they have to memorize everyone’s AI policies. They don’t do that [and] they get confused. And then sometimes these instances of academic integrity that we see are not solely students’ mal [i.e., malevolent] kind of intentions. It’s more of like, ‘I just forgot what your policy is because the other [professor] over here has an open policy. [Students] get a lot of mixed messages about what is appropriate’.” At another point this associate professor observed, “Right now it’s sort of all over the place.”

Accordingly, the student respondents for the Ithaka S+R AI literacy project voiced anxiety regarding when and how AI use was appropriate. Although we heard comments like “there’s an AI statement in every syllabus” (this is thanks to a policy requirement put through our Faculty Senate on the back of AI Survey data from 2023 and 2024 showing how confused students were), one student participant in the Ithaka study called these out as “pretty much copy paste language.” Overall, the students lamented the vagueness of most syllabus statements with comments like “I don’t know the lines of what I’m allowed to do and what I’m not allowed to do always” and “I feel like, I feel like it’s not clear enough because I do feel like multiple students would take away something different because what I take away from that would be like, don’t just copy the AI … but even that isn’t well defined in my mind … I have my own definition, but that could very obviously be different from person to person.” Then there was the apparently inconsistent application of the policies within courses in terms of actual assignments or activities. Inconsistencies across courses (both between distinct courses and between sections of the same course taught by different instructors) also caused consternation.[2]

And students were afraid to seek clarity. Coping strategies included avoiding AI discussions entirely, whether for fear that a professor might assume they wanted to cheat or because AI use does carry a stigma given the ways it has been characterized in relation to education. As one student confided, “My use of AI is kind of something, something kind of shameful for me, not not shameful, but it’s something I’m not proudest of and I try not to use it so I don’t bring it up.”

Findings from our AI survey (which has now drawn responses from more than 135,000 individual participants across more than 30 institutions, including the entire CSU) corroborate this picture. Students do see benefits to learning about AI, one of which is the purported leg up that it will give them in the job market: of the 70,000+ CSU students who responded to the survey in Fall 2025, roughly two-thirds (69%) agreed that “AI will become an essential part of most professions.”[3] Half (51.3%) also agreed that “Students who use AI for their coursework have an advantage academically” (for campus interview findings regarding these kinds of pressures, see “Cheating or Competing,” 2025).

Notwithstanding a desire for high grades, two-thirds (64.7%) agreed “I am skeptical about the benefits of AI in education”; and one-third (34.7%) went so far as to agree that “AI has negatively affected my learning experience at my university.” Three-quarters (76.5%) agreed that “The ethical use of AI is a major concern for me.”

In this context, it is significant that only one-third (33.2%) agreed that “My professors teach me how to use AI effectively.” That is, two in three don’t get that kind of instruction.

And even when they do, the survey respondents, just like the Ithaka interviewees, said that what one professor says can be contradicted by the next. We saw this in the qualitative data collected at the end of the survey, analyzed through a combination of manual iterative inspection, AI-assisted querying (for example, using enterprise versions of NotebookLM and AWS Quick Suite), and subject review. For instance, one student said, “It’s confusing having each class have a different set of rules, suggestions, policies, and enforcement around AI use.” Another requested “more clear guidelines and info-sessions for instructors and students. Every department has their own approach and opinion, and even within each department, people can’t make up their minds.” This student, a liberal studies major, went on, “People are afraid to talk about AI as a reality, so when we do talk about it, the conversation is not productive and provides zero actionable insights. While it’s fair for people to have differing opinions on AI, I feel that every department needs to sit down and come up with CLEAR guidelines on how to regard AI in the classroom.”

The Emergency Adjustment Era Is Over

The triage mindset has underwritten highly individuated and sometimes rushed or superficial instructor responses to AI and this, in turn, has led to a communication breakdown, exacerbated by the way that AI has supercharged distrust between teachers and students. The problem created for students on any given campus by the sometimes vastly divergent front-line responses of individual instructors might be alleviated with an institution-wide policy. But disciplines differ so widely that a one-size-fits-all solution won’t work. Plus, top-down approaches rarely find fertile soil within academic organizations committed to shared governance and to the aforementioned spectre of academic freedom. This makes departments or curricular units (programs, majors) a good launch point for much-needed curricular coherence.

Indeed, as the SDSU faculty interviewee quoted earlier put it, “I think that one thing my department could do is develop better program and sub-program agreement on what do we want students to get about AI as a degree learning outcome. … The school level is kind of too high to be actionable… But if we were able to at least agree [that] we are the main instructors that these students are going to have and here’s what we’re all going to do… I think the department level would be productive because that influences the degree trajectory most directly.”

ACORN: A By-Faculty-For-Faculty Curricular Adaptation Toolkit

Banner for the SDSU instantiation of the AI-ready curricular options resource network (ACORN) Toolkit course prototype; shown with permission from the ITS division at SDSU.

The AI-Ready Curricular Options Resource Network or ACORN project emerged in this context. Its name seeks to honor the acorn’s rich indigenous significance in California, home of the CSU, and to reference the nourishing and generative, seed-like potential encapsulated in the Toolkit that the initiative was designed to produce.

Funded through the CSU’s aforementioned AIEIC initiative and led by PI EJ Sobo (Professor, Anthropology; ITS AI Faculty Fellow) and Co-PI Norah Shultz (Professor and Chair, Sociology), the ACORN initiative prioritizes the whole (curriculum) over the part (course). It launched in Summer 2025 with the audacious aim to plan, create, apply, evaluate, refine, and deliver — by the end of Summer 2026 — a scalable toolkit for making comprehensive, consensus-based, data-informed, program-level AI-related curricular changes. By taking a holistic approach that systematically interrogates AI’s place within a given program of study, the Toolkit would help faculty units avoid gaps in coverage of relevant AI tools or literacies, reduce unnecessary redundancies between courses, and otherwise ensure the coherence of their major or program to uplift critical thinking, increase graduates’ workforce readiness, and enhance the student experience overall. We describe the Toolkit’s contents below; but first, we review how it was created.

How the Toolkit Was Built

The ACORN Toolkit was produced by faculty, for faculty, with the student experience held firmly in mind to help ensure that Toolkit use would lead to comprehensive AI-ready programs that foster better student retention and workforce readiness. Largely through biweekly meetings, the effort has entailed building consensus regarding how to approach the question of curriculum redevelopment, designing tools in support of curricular renewal, creating a user-friendly Canvas site to house the tools, and stirring up excitement among colleagues about the Toolkit’s potential for enhancing curricular coherence and thus improving both student experiences and outcomes.

To start, a taskforce comprising eight SDSU College of Arts and Letters (CAL) faculty members was assembled to manage the work. Sobo, who also serves as a campus AI Faculty Fellow under the auspices of the IT division, chose CAL not only because it is her home college but because CAL, with 19 academic departments offering over 85 majors and minors, is both the largest college at SDSU and responsible for over one-third of all instruction on campus, reaching nearly all c.25,000 undergraduates through GE and writing-related offerings. The College also houses a pioneering digital humanities initiative and, it must be said, its faculty are quantitatively, as per the AI Survey, the least welcoming to AI in all of SDSU.

Nomination to the Taskforce required prior use of AI in teaching and demonstrated AI fluency (e.g., as confirmed via completion of the Academic Applications of AI (AAAI) microcredential, available CSU-wide and to the public); affiliation with a high-impact program; commitment to group decision-making concerning curricular innovations; experience in curricular planning; and proven capacity as a respected role model at SDSU. Diversity in career stage, discipline, and leverageable cross-campus connections also were crucial. Members included Alvin Henry (Associate Professor and Chair, Asian American Studies and CAL Careers Hub); Beth Pollard (Professor and Chair, History); Consuelo Salas (Assistant Professor, Rhetoric and Writing); Fernando Bosco (Professor and Chair, Geography); Rob Malouf (Professor, Linguistics); Sam Kobari (Lecturer, Anthropology); and Sobo and Shultz. The Taskforce was supported by Instructional Technology Services (ITS), via an instructional designer (Kristi Collins) and ITS’s AI Student Fellows (Rosabel Ibrahim and Abir Mohamed).

The Toolkit was built in three phases informed by the Plan-Do-Study-Act (PDSA) or Deming-Shewhart Cycle, a foundational Continuous Quality Improvement (CQI) approach emphasizing the need to test and refine changes in an iterative, systematic, data-informed manner. First, in Summer 2025 (Phase One), we met in two day-long ‘boot camp’ sessions to develop expertise regarding proven models for curricular design and assessment; established perspectives on AI literacies; workforce-related AI needs; program-relevant data from SDSU’s AI Survey, with training from ITS on how to use SDSU’s AI Survey Dashboard; and ways of dealing with resistance. Guidance was provided by SDSU authorities on such matters: Sarah Tribelhorn (AI Librarian); Stephen Schellenberg (Senate Chair and past Associate Dean, Division of Undergraduate Studies); DJ Hopkins (from our CTL); and David Goldberg (Management Information Sciences and PI for the AI Survey work). Wearing his CAL Careers Fellow hat, Taskforce member Alvin Henry provided an overview of workforce-related issues.

In Fall term, we began the Phase Two work of creating tools to support whole-curriculum redesign, which depended first on deciding what tools to create. The list evolved over time. For example, while providing a custom chatbot through which to query program materials seemed at first a good idea, as Fall wore on we realized that most people would, by Fall 2026, be well equipped to devise their own. Plus, portability of such a tool would be stymied by inter-campus infrastructural differences. In the end, we decided that a prompt dictionary would be a better option. Another lesson learned in Fall was how hard it might be for Toolkit users to keep their eyes on the whole curriculum. Even Taskforce members sometimes reverted to thinking about lower-level changes or isolated courses. We were reminded to reinforce messaging, within the Toolkit, situating the whole curriculum as the target and reminding users that systems thinking was required, so that results would be programmatic and not piecemeal.

We also began, in Fall, to stress-test certain tools within our own departments or home programs. For example, using the most up-to-date syllabi and curricular maps, some of us applied prompts developed to help determine how AI currently figures in the various courses of a given curriculum. The same prompts help identify strategic spots for high-impact AI-related enhancements, so that the AI literacies that might be required of program graduates are taught somewhere within the program as a whole. In doing so we identified weak spots in the logic and iteratively upgraded the prompt list. As another example, we communally added to a list of ‘tasks AI can do for you’ whereby hesitant faculty members might come to better grasp how using AI for things like resetting the dates in one’s syllabi could lead to significant time savings.
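The kind of whole-program check described here, finding literacies taught nowhere and literacies repeated across many courses, can be pictured with a small Python sketch. It is an assumption-laden illustration, not one of the Toolkit's prompts: the function `coverage_report`, the course labels, the literacy names, and the threshold of three courses are all hypothetical, and the course-to-literacy map is assumed to come from a faculty (or AI-assisted) review of syllabi.

```python
from collections import defaultdict


def coverage_report(required, course_map, redundancy_threshold=3):
    """Return (gaps, heavy_overlap) for a program's AI literacy coverage.

    required: literacies the program has agreed graduates need
    course_map: course label -> literacies that course addresses
    redundancy_threshold: hypothetical cutoff for "worth a second look"
    """
    covered_by = defaultdict(list)
    for course, literacies in course_map.items():
        for lit in literacies:
            covered_by[lit].append(course)

    # Gaps: required literacies that no course addresses.
    gaps = [lit for lit in required if not covered_by.get(lit)]
    # Overlap: required literacies addressed in many courses.
    heavy_overlap = {
        lit: sorted(covered_by[lit])
        for lit in required
        if len(covered_by.get(lit, [])) >= redundancy_threshold
    }
    return gaps, heavy_overlap


# Hypothetical program data, for illustration only.
required = ["prompt evaluation", "bias and ethics", "citation of AI use"]
course_map = {
    "Course A": ["bias and ethics"],
    "Course B": ["bias and ethics", "prompt evaluation"],
    "Course C": ["bias and ethics"],
}

gaps, heavy_overlap = coverage_report(required, course_map)
print("Taught nowhere:", gaps)
print("Taught in many courses:", heavy_overlap)
```

Whether an overlap is an unnecessary redundancy or deliberate reinforcement remains a judgment for the faculty involved; the sketch only surfaces candidates for discussion.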

In Spring 2026 (Phase Three), we began formal stress-testing. Over the break, our instructional design specialist stood up a Canvas course based on solid instructional design principles and with an eye to portability. The Taskforce iteratively refined the design during January and February. We then embarked on the real stress testing, both within our departments and beyond, having recruited several others both within CAL and from various colleges across campus to help kick the tires. We also began work on peripherals such as a ‘welcome video’. At the end of the semester, we will review results and, as the final report is prepared, we will further refine the Toolkit based on Phase Three experiences to ensure viability for wider-scale distribution and use.

The PDSA mindset, inclusion of established curricular redesign and AI literacy models, appreciation of the need for faculty-led planning, meaningful student involvement, and insistence on a data-informed approach have been key supports in establishing a viable, scalable set of tools. To these we now turn.

What’s In the Box?

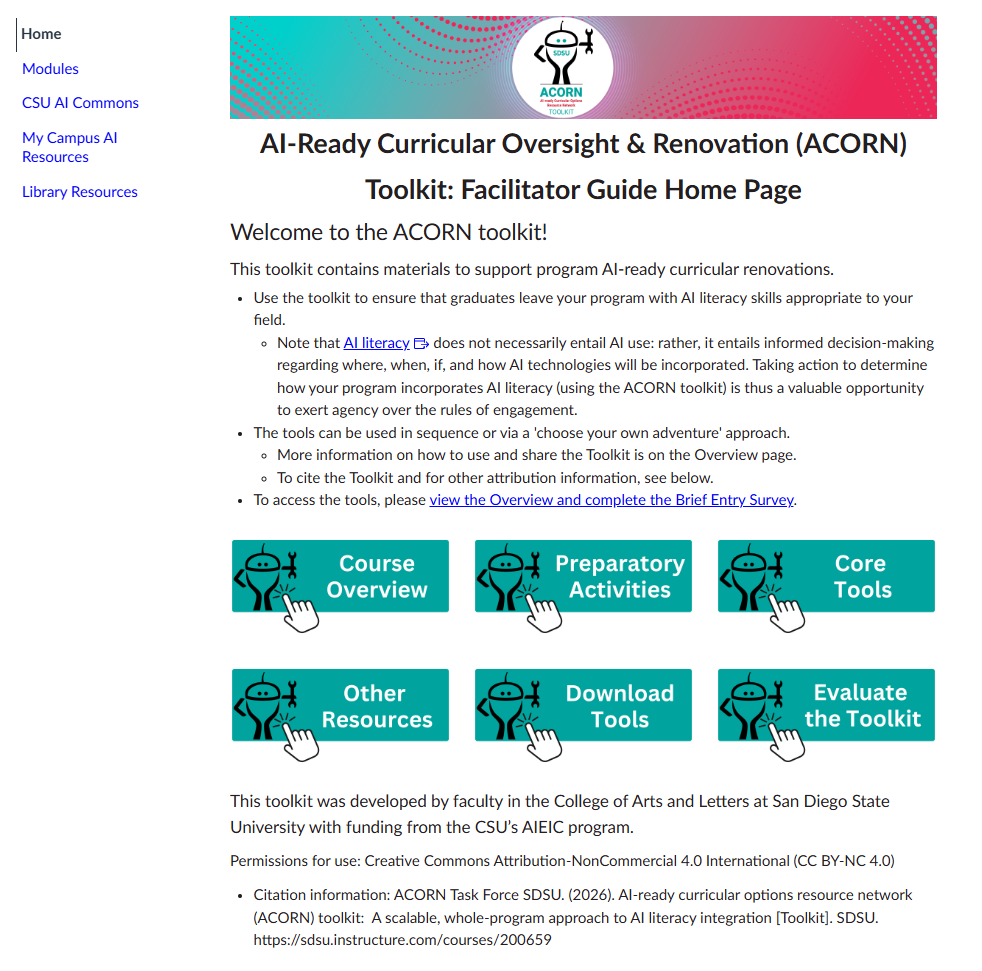

The toolkit is packaged within a Canvas course module (Canvas is a widely-used web-based learning management system or LMS) to support its easy dissemination across not only SDSU but all CSU campuses. Individual faculty members can self-enroll and use the Toolkit as change facilitators. They can download and share, via hard copy or electronically, whichever tools they see fit to apply; or they can share them as one might share any other on-screen offering (i.e., by projecting it; by inviting a peer to look at one’s screen).

The tools are meant to help divisions incorporate AI literacy in a strategic, whole-program way; but, importantly, and as the home page greeting informs new users, literacy does not necessarily entail use. That is, the literacy skills to be woven into a curriculum should be field-appropriate. The toolkit is meant to scaffold the implementation of curricular changes that support informed decision-making regarding where, when, if, and how AI technologies will be incorporated. Toolkit users are thus encouraged to take advantage of what might be a valuable opportunity to exert agency over the rules of engagement with AI. This approach is not only well-anchored in a shared governance model and one that respects expert authority; it also holds promise in terms of increasing the initiative’s appeal for programs where refusalism makes the most sense.

The home page and overview also emphasize that the tools should be used via a ‘choose your own adventure’ approach. Each department or major may be starting at a different point and so tools appropriate for one might be redundant in another.

Prototype of the ACORN Toolkit home page as displayed in the Canvas Learning Management System; produced by Kristi Collins with artwork by Jiong Li. Shown here with permission from the ITS division at SDSU.

The tools themselves are organized into two modules, which one accesses after taking a brief entry-point survey (to aid in assessment, initially regarding reach and later, in combination with a post-use survey, effectiveness). One module contains tools supporting what are termed ‘preparatory activities’ and the other contains the ‘core’ tools. The preparatory activity tools were designed to help program leaders (faculty facilitators) lay down a strong foundation with individuals and to build some momentum within the larger group that can support dialog and consensus-building, team work, and actual curricular change. They include a list of tasks AI can do for faculty to free up time for more engaging or important work and an AI teaching persona survey. The former can help faculty see the value in handing off certain forms of administrivia or busy-work to AI; the latter can be used for self-reflection.

The preparatory tools, like all the tools created, are meant mainly as conversation starters. Like the AI Survey, the ACORN Toolkit is thus in some ways a ‘listening infrastructure’ through which all voices can gain recognition. Here it is worth noting that many faculty still feel the need for a safe space in which to think through and articulate their actual stance on AI, or to vent or process grief and other painful feelings and thoughts that AI has brought to the surface. In addition, many departments have not yet come together to discuss AI, so even if people have had plenty of other conversations about it, conversations at the department or program level must be enabled, and the preparatory tools do just that.

The core tools more directly help in adjusting degree learning outcomes or DLOs so that AI literacy is clearly part of the picture. They include a DLO review worksheet, a guide to how AI itself might aid in the process, and instructions on how to access and query the AI survey data regarding one’s campus or department, including in regard to various demographic subgroups (note that data from fewer than 25 respondents will not be displayed, to protect respondent privacy).
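The privacy rule mentioned above (no display for groups of fewer than 25 respondents) is a form of small-cell suppression, which can be sketched as follows. This is not the AI Survey Dashboard's actual code, which we do not reproduce here; the function name `displayable`, the group labels, and the counts are hypothetical, and only the threshold of 25 comes from the text.

```python
MIN_RESPONDENTS = 25  # threshold stated in the text


def displayable(groups, min_n=MIN_RESPONDENTS):
    """Return percent agreeing per subgroup, or None where the group is too small.

    groups: subgroup label -> (number agreeing, number of respondents)
    """
    results = {}
    for label, (n_agree, n_total) in groups.items():
        if n_total < min_n:
            results[label] = None  # suppressed to protect respondent privacy
        else:
            results[label] = round(100 * n_agree / n_total, 1)
    return results


# Hypothetical counts, for illustration only.
sample = {
    "Group A": (40, 120),
    "Group B": (9, 24),   # below the threshold, so not shown
    "Group C": (10, 25),  # exactly at the threshold, so shown
}

shown = displayable(sample)
for label, pct in shown.items():
    print(label, "suppressed" if pct is None else f"{pct}% agree")
```

A practical consequence for Toolkit users is that very fine-grained subgroup queries in small departments may return nothing, in which case a broader grouping is the sensible next step.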

Within the modular structure provided by Canvas, the Toolkit looks like this:

- Module 1: Welcome and Overview (Video introduction and Brief Entry Survey included)
- Module 2: Preparatory Activities
  - Preparation Tools
    - Share information about Tasks AI can Support (Part 1. List; Part 2. Real life examples)
    - Take the Temperature: Conversation Starters to Aid in Discovery
    - Discover the Various AI Teaching Personas of Unit Faculty
    - Invite a Guest Speaker to Open up Conversation
    - Organize a Student-led Focus Group
- Module 3: Whole-curricular 'AI-ready' renovation tools
  - Core Renovation Tools
    - Review your unit's AI-related profile using the AI Survey Dashboard
    - Enumerate AI-related competencies required in the jobs your graduates are being prepared to enter
    - Develop Consensus on an AI Literacy Framework for your Program
    - Review your Existing DLOs using our DLO Review Process Guide
    - Organize and Query your Program Materials to Plot the Course for AI Literacy Integration (use a GPT!)
- Other useful tools for change makers
  - Downloadable Resources
  - Collection of Files Posted in this Course (for electronic or hard-copy sharing)
- Evaluate the Toolkit here

The ‘other useful tools’ area contains a few selected white papers and scholarly articles as well as links to SDSU’s AI Survey Dashboard, SDSU’s AI Microcredential (also now a CSU-wide AI resource and available for free to the public); and the CSU’s AI Commons, which contains tools and information for faculty, students, and staff along with links to campus-specific AI resource hubs. These will come in handy in Fall 2026 when the ACORN Toolkit is made available CSU-wide.

Scaling Across Disciplines — and Campuses

To support scaling up, not only across our campus (as we are doing now, while stress-testing and piloting the tools) but across the CSU, universal design principles were applied. The content’s portability has been ensured, for instance through the use of file types and formats that anyone at any CSU would find easy to access. Further, references to any specific campus will not appear in the shared version.

Even so, piloting is making clear to us that three challenges will remain. These involve faculty’s need for (1) Time to ‘arrive’ (i.e., time to engage in the ‘throat clearing’ necessary so that real discussion can begin); (2) Clarity regarding the Task at Hand (i.e., that the changes needed are at the whole-curricular and not class level, and that AI use is not always necessary for a person to be AI literate); and (3) Time to do the Work (that faculty function within a time desert is unfortunately axiomatic).

Facilitators have the added need for courage: inviting already overtaxed faculty, as well as academics whose success has hinged on problem identification, to engage in group processes such as shifting a curriculum is risky business. The gambit can pay off, however: leaning into the productive friction often created when faculty come together to hash things out can be quite generative. The alignment made possible through use of the ACORN Toolkit carries a secondary benefit: not only does it provide the kind of coherence for which students hunger; it also can boost faculty well-being by enabling participation in a more meaningfully aligned set of courses. Further, given how the ACORN initiative prioritizes faculty ownership of AI literacy plans, the reforms instantiated should be all the more sustainable. Plus, the next time the curriculum needs realignment, the opportunity to make that happen should be easier for faculty to embrace.

Of course all this is yet speculative. We do know that faculty can lead change. Over the past few decades, institution-wide change has been achieved most effectively when faculty take a disciplinary lens to the wicked problem at hand rather than trying to apply a one-size-fits-all solution. This is one reason why we believe that the ACORN Toolkit is poised for success. We won’t know whether the ACORN Toolkit takes root, however, until next Fall when it is formally rolled out. In the meantime, we look forward to analyzing results from this pilot test phase and ensuring the lessons learned locally infuse the campus-wide product prior to its dissemination. If you’d like to be enrolled in the prototype to observe, please get in touch. As with the AI Survey and Micro-credential, SDSU will be more than happy to share this work via our AI resources page in the hopes that by doing so we can optimize outcomes for students and enhance the experiences they have as they make their way through the higher education system. As the proverb often traced to Chaucer has it, “Mighty oaks from little acorns grow”; and, as Emerson has informed us, “The creation of a thousand forests is in one acorn.”

About the Authors

Elisa Sobo is a professor of anthropology and AI Faculty Fellow at San Diego State University. A medical anthropologist with over 35 years of professional experience both within healthcare itself and higher education, her work has generally focused on the varied responses of healthcare consumers, and providers, to medical technologies as well as resistance to and refusal of same. Dr. Sobo has published 13 books, including a forthcoming 6th edition of Caring for Patients in Different Cultures and Dynamics of Human Biocultural Diversity (2020), as well as numerous scholarly and public-facing articles. Her work has been covered by major media platforms including the New York Times, Fox News, and NPR. Learn more at anthropology.sdsu.edu/people/sobo. Correspondence: esobo@sdsu.edu

Norah Shultz is Professor of Sociology and Chair of the Department of Sociology at San Diego State University. For the past two decades her scholarly work has been in the sociology of higher education, in topics such as the relationship of multiculturalism and internationalization in global curricula, general education, leading change in higher education, diversity, equity and inclusion and student success. She is the editor of Revising the Curriculum and Co-Curriculum to Engage Diversity, Equity, and Inclusion (2024, Routledge) and Co-Editor of Challenges of Multicultural Education: Teaching and Taking Diversity Courses (2015, Routledge). Correspondence: nshultz@sdsu.edu

The AI-Ready Curricular Options Resource Network (ACORN) Taskforce members include: Fernando Bosco, Professor, Geography; Alvin Henry, Associate Professor, Asian American Studies and CAL Careers Hub; Rosabel Ibrahim, Student, Management and Information Science; Sam Kobari, Lecturer, Anthropology; Rob Malouf, Professor, Linguistics; Elizabeth Pollard, Professor, History; and Consuelo Salas, Assistant Professor, Rhetoric and Writing.

Cite this article

Sobo, E. J., & Shultz, N. (2026). The Case for Coherent, Whole-Program AI Literacy Integration. Society and AI. https://societyandai.org/insights/case-for-coherent-ai-literacy-integration/


1. The CSU’s February 2025 move to make ChatGPT available to all, ostensibly ensuring equitable access to the tool for its 460,000 students and 63,000 faculty and staff members, catalyzed confusion. The arrangement was questioned from many angles but most relevant here was its reading by some as offering students permission to cheat regardless of what individual teachers said.

2. The latter problem is being addressed through a sister AIEIC award, this to Kathryn Valentine, Jennifer Burke Reifman, Katie Hughes, and our Rhetoric and Writing Studies (RWS) department, where a key course (RWS 200), focused on composition and critical thinking and required of all students, is being re-designed to include curricular activities focused on critical engagement with and understanding of AI and writing, to be applied by all assigned to teach the course.

3. Significantly fewer (57.5%) agree that “AI will play a significant role in my future career.” Whether this represents denial or resistance is a matter of speculation but the quantitative data suggest a significant proportion of students fall in the ‘refusalist’ category, with some very actively seeking majors that will not prepare them for AI-augmented careers.