Collaborative intelligence, sometimes called hybrid intelligence in recent literature, asks a better question than “Will AI replace us?” It asks: How can human and machine intelligence work together to produce insight neither could achieve alone? That is the core of my work at the intersection of AI and human learning: treating AI not as an oracle, but as a partner that extends perception, memory, and analysis while remaining accountable to human values and judgment.

A Partnership Model, Not a Replacement

Collaborative intelligence is not passive consumption of AI outputs, nor is it autonomous systems making high-stakes calls without people. It is teamwork: human judgment setting purpose and boundaries; computational capability exploring options at speed; and explicit handoffs that keep accountability clear.

Where humans excel

- Framing problems in context and setting goals that reflect values

- Interpreting meaning in social, cultural, and ethical frameworks

- Exercising discretion when stakes are high and evidence is ambiguous

Where machines excel

- Searching vast information spaces and retrieving evidence quickly

- Spotting patterns across large, noisy datasets

- Simulating scenarios and handling routine cognitive tasks at scale

The design task is to make these strengths complementary: humans lead on purpose and interpretation; machines widen the search space and surface candidates; humans weigh consequences and decide.

The Propose–Critique–Revise Loop

A simple loop captures how collaboration becomes co-authorship:

- Propose (Machine-assisted). Humans pose a question and constraints; AI proposes options, retrieves sources, or drafts preliminary models.

- Critique (Human-led). Experts test claims against context, ethics, and local knowledge; they reject, refine, or redirect.

- Revise (Together). Prompts, assumptions, and targets are updated; evidence is strengthened; explanations are clarified.

- Repeat until ready. The loop continues until the output reaches a standard fit for action and audit.

This turns Q&A into an iterative dialogue that leaves an inspectable trail—what was asked, what was proposed, what was accepted, and why.
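The loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed implementation: `collaborate`, `TrailEntry`, and the three callables (`propose`, `critique`, `revise`) are hypothetical names, and the toy functions stand in for a real model call and a real human review.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class TrailEntry:
    """One round of the loop: what was asked, proposed, decided, and why."""
    prompt: str
    proposal: str
    accepted: bool
    rationale: str


def collaborate(
    prompt: str,
    propose: Callable[[str], str],
    critique: Callable[[str], Tuple[bool, str]],
    revise: Callable[[str, str], str],
    max_rounds: int = 5,
) -> List[TrailEntry]:
    """Run propose -> critique -> revise until accepted or rounds run out."""
    trail: List[TrailEntry] = []
    for _ in range(max_rounds):
        proposal = propose(prompt)                # machine-assisted step
        accepted, rationale = critique(proposal)  # human-led step
        trail.append(TrailEntry(prompt, proposal, accepted, rationale))
        if accepted:
            break
        prompt = revise(prompt, rationale)        # fold the critique into the next prompt
    return trail


# Toy stand-ins (assumptions for illustration only).
def toy_propose(prompt: str) -> str:
    return f"Draft answering: {prompt}"


def toy_critique(proposal: str) -> Tuple[bool, str]:
    if "cite sources" in proposal:
        return True, "Sources were requested; ready for review."
    return False, "The draft was not asked to cite sources."


def toy_revise(prompt: str, rationale: str) -> str:
    return prompt + " (cite sources)"


trail = collaborate(
    "Summarize the policy options", toy_propose, toy_critique, toy_revise
)
for entry in trail:
    print(entry.accepted, "|", entry.prompt, "|", entry.rationale)
```

The returned list is the inspectable trail: each entry records the prompt, the proposal, the decision, and the reviewer's reason, so a later audit can replay how the final output was reached.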

Three Guiding Principles

1) Transparency

People must be able to see sources, assumptions, limitations, and known failure modes. Documentation should answer: What data trained this model? How was it evaluated across groups? What does this specific output cite, and how confident is it?

2) Calibration

Good systems teach appropriate trust. They show uncertainty honestly (confidence intervals, alternative readings, edge cases) and invite human challenge. Overconfidence—by systems or users—is a frequent cause of error.
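One way to check whether stated confidence deserves trust is to compare it with observed accuracy. The sketch below is a simple binned comparison, similar in spirit to an expected calibration error; the function names, the bin count, and the sample numbers are illustrative assumptions rather than a recommended protocol.

```python
from typing import List, Tuple

Row = Tuple[float, float, int]  # (mean stated confidence, observed accuracy, count)


def calibration_rows(
    confidences: List[float], correct: List[bool], n_bins: int = 5
) -> List[Row]:
    """Group predictions by stated confidence and report accuracy per non-empty bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        if not 0.0 <= conf <= 1.0:
            raise ValueError("confidence must lie in [0, 1]")
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 falls in the last bin
        bins[idx].append((conf, ok))
    rows: List[Row] = []
    for items in bins:
        if items:
            mean_conf = sum(c for c, _ in items) / len(items)
            accuracy = sum(1 for _, ok in items if ok) / len(items)
            rows.append((mean_conf, accuracy, len(items)))
    return rows


def calibration_gap(rows: List[Row]) -> float:
    """Count-weighted average of |stated confidence - observed accuracy|."""
    total = sum(n for _, _, n in rows)
    return sum(n / total * abs(conf - acc) for conf, acc, n in rows)


# Made-up example: the system is confident far more often than it is right.
confidences = [0.95, 0.95, 0.95, 0.95, 0.65, 0.65]
correct = [True, True, False, False, True, False]

rows = calibration_rows(confidences, correct)
gap = calibration_gap(rows)
for mean_conf, accuracy, count in rows:
    print(f"stated {mean_conf:.2f} vs observed {accuracy:.2f} (n={count})")
```

A large gap between stated and observed values is a signal of overconfidence, and a cue to show users wider uncertainty or alternative readings.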

3) Consent and Credit

When communities contribute data, knowledge, or cultural insight, they deserve acknowledgment and, where appropriate, shared benefits. Governance should respect intellectual property, privacy, and cultural protocols so collaboration remains legitimate and sustainable.

Applications Across Domains

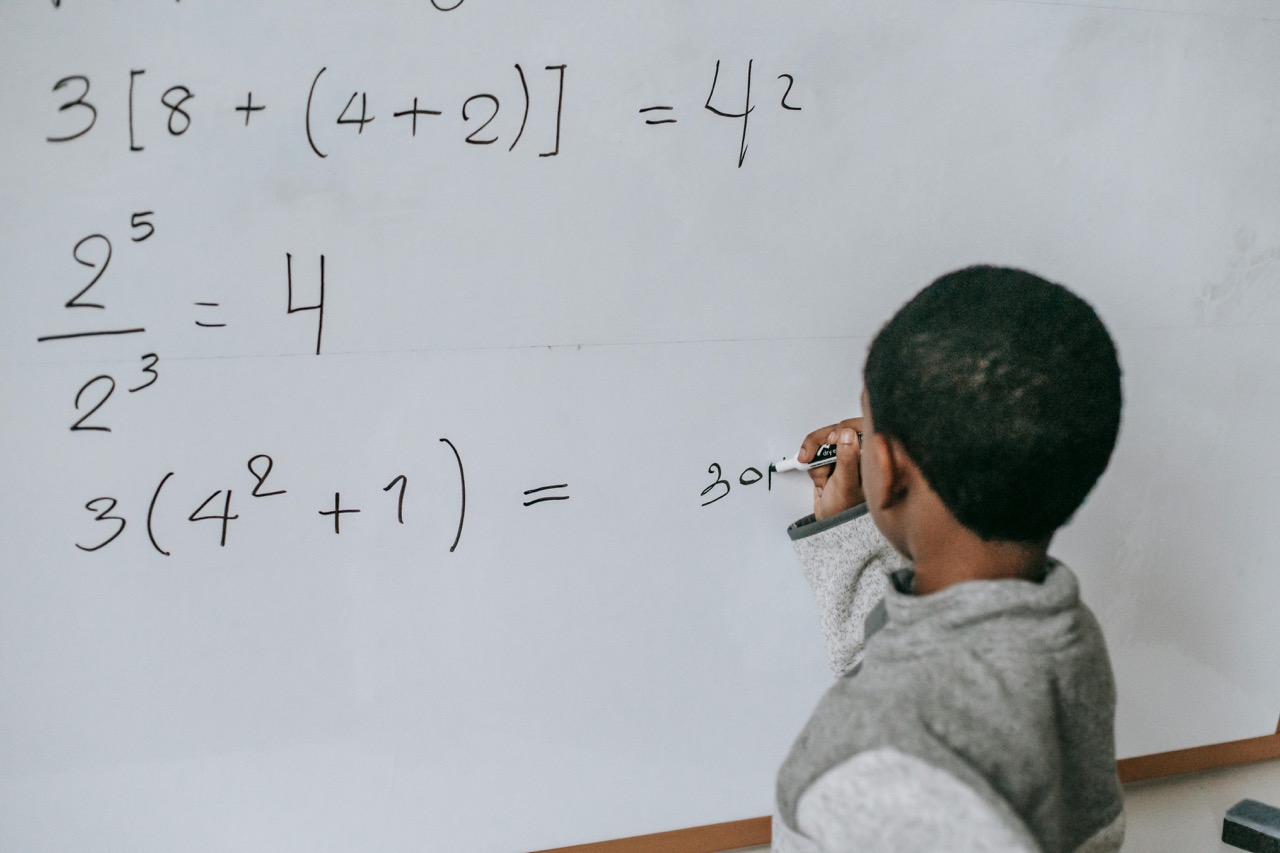

Education. Students co-draft outlines, compare argument structures, or visualize science processes with AI, then strengthen the work through reflection, peer critique, and teacher feedback. The aim is metacognition—learning how to learn with and without machines.

Research and Design. Scientists scan literature and generate hypotheses with AI, then design confirmatory tests. Engineers co-design with generative tools, but human teams own safety cases and trade-off decisions. Clinicians combine patient histories with risk models, keeping human consent and duty of care at the center.

Civic Decision-Making. Communities analyze public comments, surface common concerns, and model policy scenarios with AI support, while human deliberation remains the locus of decision. Technology should strengthen democratic reasoning, not shortcut it.

Ensuring Quality and Fairness

Rigor is non-negotiable. In my practice, four routines anchor quality:

- Source grounding. Retrieval-augmented methods tie claims to specific citations; unverifiable outputs do not ship in high-stakes contexts.

- Versioning and documentation. Model cards and data cards record training data, evaluation slices, limitations, and updates.

- Red-teaming. Structured stress tests probe bias, adversarial prompts, edge cases, and misuse scenarios before deployment.

- Diverse benchmarks. Evaluations span contexts and populations; lab-only wins rarely survive real-world diversity.
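The source-grounding routine can be expressed as a release gate. The sketch below assumes a hypothetical `Claim` record and treats "has at least one citation" as a stand-in for "verifiable"; a real check would also confirm that each cited source supports the claim.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Claim:
    text: str
    citations: List[str] = field(default_factory=list)  # hypothetical source identifiers


def uncited(claims: List[Claim]) -> List[str]:
    """Return the text of every claim that has no citation attached."""
    return [claim.text for claim in claims if not claim.citations]


def may_ship(claims: List[Claim], high_stakes: bool) -> bool:
    """In high-stakes contexts, block release if any claim lacks a citation."""
    if not high_stakes:
        return True
    return not uncited(claims)


claims = [
    Claim("Policy A reduced wait times.", citations=["report-2021"]),
    Claim("Policy B has no downside."),  # no source attached
]

print(may_ship(claims, high_stakes=False))
print(may_ship(claims, high_stakes=True))
print(uncited(claims))
```

Returning the list of uncited claims, not only a yes or no, gives reviewers something concrete to fix before the output is resubmitted.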

The Importance of Good Data

Collaboration is only as sound as its data. Useful datasets are relevant, diverse, timely, and ethically sourced. Smaller, well-curated corpora often outperform massive, indiscriminate collections for specialized tasks. Synthetic data can fill gaps, but only with validation that checks for bias amplification and fidelity to real-world distributions.

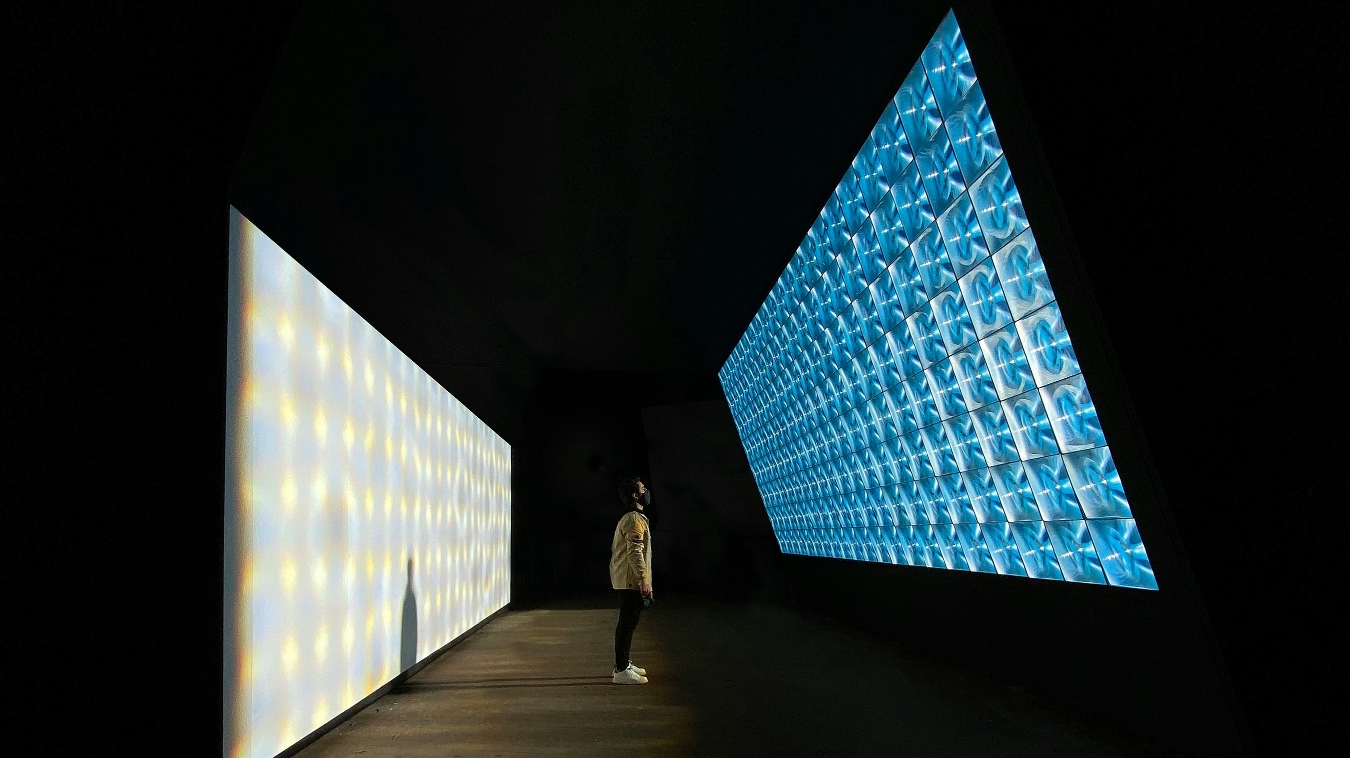

Ergonomic Tools for Collaboration

Interfaces shape outcomes. Effective tools:

- Show citations by default and let readers open sources in place

- Support side-by-side comparisons of options and rationales

- Preserve conversational context across iterations

- Provide guardrails for safety-critical actions and clear rollback paths

- Favor privacy by design (on-device processing where feasible)

- Deliver accessibility (alt text, captions, multilingual support) by default

Clarity beats cleverness: if users cannot see why an answer arose, collaboration stalls.

Redefining Work and Learning

As collaboration scales, institutions need new agreements:

- Decision rights. Who approves, who audits, and who is accountable when things go wrong? Governance should match oversight to risk (human-in-the-loop for medium risk; human-at-the-controls for high risk).

- Labor transition. Some tasks will be augmented, others redesigned. Invest in reskilling, portable benefits, and emerging roles—prompt engineers, model stewards, impact evaluators. Treat learning as a continuing public good.

- Culture. Reward the quality of questions and the clarity of reasoning, not only speed. Diverse teams surface different failure modes and opportunities; inclusion is both ethical and epistemic.
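The decision-rights point, matching oversight to risk, can be written down as an explicit lookup. This is a sketch under stated assumptions: the `Risk` enum and `required_oversight` are hypothetical names, and only the two tiers named in the list above are mapped. The low-risk tier is deliberately left unmapped so that an institution has to decide it explicitly instead of inheriting a default.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Only the tiers stated in the text are mapped; LOW is intentionally absent.
OVERSIGHT = {
    Risk.MEDIUM: "human-in-the-loop",
    Risk.HIGH: "human-at-the-controls",
}


def required_oversight(risk: Risk) -> str:
    """Look up the oversight mode for a risk tier, failing loudly on unmapped tiers."""
    if risk not in OVERSIGHT:
        raise ValueError(f"No oversight policy has been agreed for {risk.value} risk")
    return OVERSIGHT[risk]


print(required_oversight(Risk.MEDIUM))
print(required_oversight(Risk.HIGH))
```

Keeping the mapping in one place makes it auditable: anyone can see which oversight mode applies to which tier, and which tiers still lack an agreed policy.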

Governance for Trust

Trustworthy collaboration depends on public standards and scrutiny:

- Common standards for documentation, testing, privacy, and explainability

- Independent audits and incident reporting for high-risk deployments

- Sandboxing to test in controlled settings before scale

- Open standards and open models in public procurement to enable inspection, adaptation, and local innovation

These are practical mechanisms for legitimacy, not decorative policies.

A Broader Vision

Collaborative (hybrid) intelligence reframes knowledge as a living process: co-produced, revised in use, and accountable to those affected. Value shifts from static outputs to systems that learn with their users. The frontier is not only smarter answers, but wiser arrangements—people, practices, and tools designed to expand human agency rather than outsource it.

A Call to Action: Towards a Civic Project

This is more than a technical pattern; it is a civic commitment. We should build institutions, tools, and habits that honor human dignity while harnessing computational power. Educators can teach inquiry alongside content. Organizations can measure learning alongside output. Policymakers can secure the conditions—governance, transparency, equity—for fair, trustworthy collaboration.

My conviction remains: our most important task is not to build ever-more powerful AI, but to build more thoughtful partnerships—arrangements where humans and machines create knowledge neither could alone, in service of futures aligned with our deepest values.