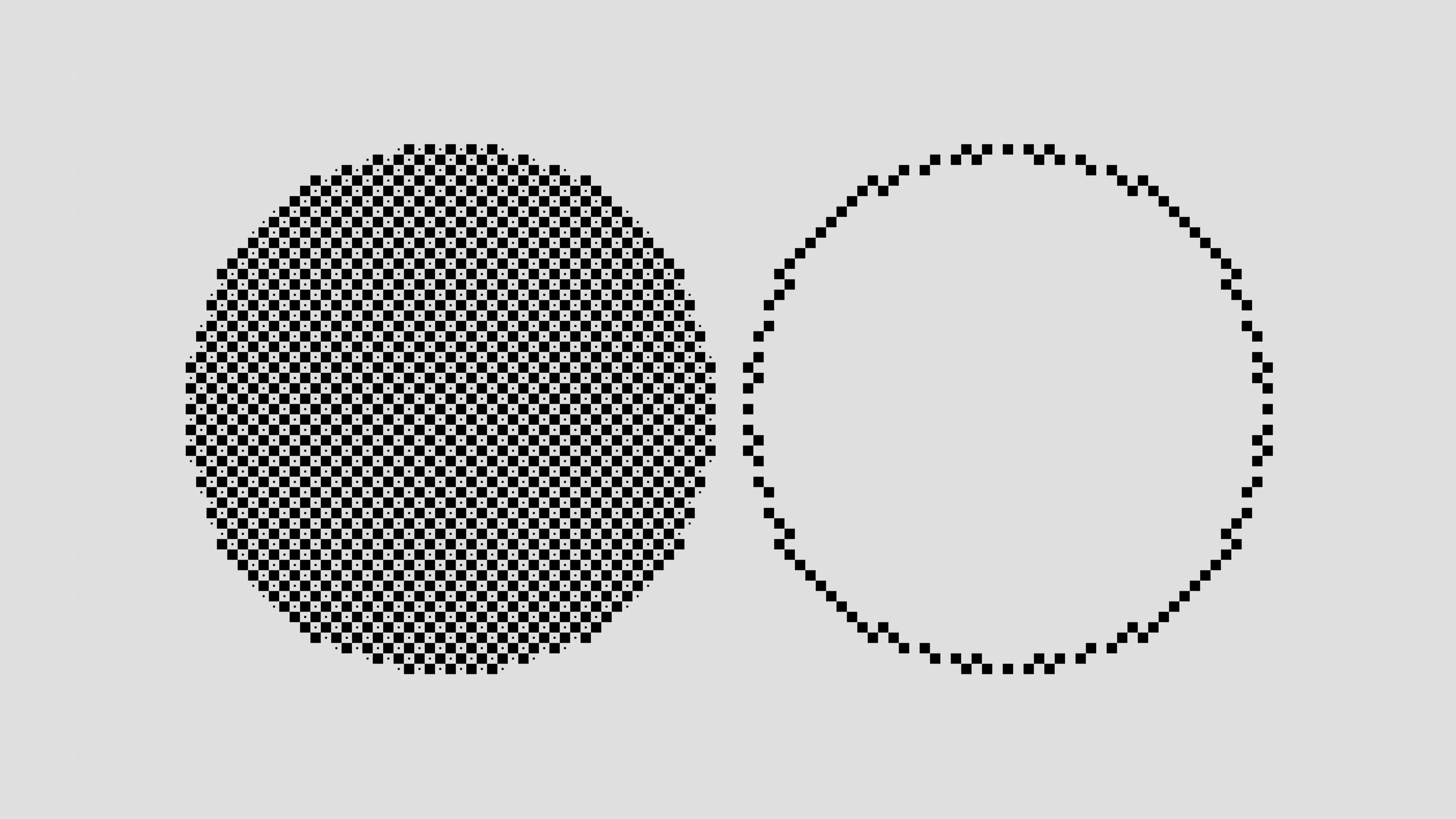

The image above represents two circles—two worlds of knowing.

The left circle, densely layered, mirrors Indigenous Knowledge Systems (IKS): continuous, relational, and intergenerational—wisdom carried through language, practice, ceremony, and responsibility to land.

The right circle, a spare outline, evokes synthetic knowledge—code-driven, scalable, and precise, yet incomplete without meaning, ethics, and context.

Between these circles lies the space of translation. Much of what sustains ecological balance, cultural continuity, and intergenerational learning remains absent from contemporary AI. If AI systems are to understand the worlds they model, the filled circle (voices, protocols, and sacred practices) should shape how “intelligence” is defined and operationalized. The objective is to move from pattern recognition toward wisdom recognition.

Reframing Ethics for Digital Sovereignty

Responsible engagement with IKS rests on sovereignty, consent, and relational accountability. Prior work on hurricane resilience (Chakravarty & Gattupalli, 2024) demonstrates that traditional weather and ecological knowledge can inform community-based preparedness when communities lead the terms of use.

This article proposes three ethical coordinates (co-design, Indigenous data sovereignty, and enforceable benefit-sharing) that determine whether AI strengthens or undermines community autonomy.

Understanding Indigenous Knowledge Systems

IKS are place-based epistemologies refined over millennia. They integrate environmental stewardship, healing practices, governance protocols, linguistic structures, and artistic expressions. Knowledge is relational and inseparable from land, community, and ancestry (McGregor, 2004).

In disaster contexts, Indigenous forecasting—cloud formations, wind behavior, and plant cues—often provides early warnings before formal alerts. In this framing, “data” include oral histories, seasonal ceremonies, and embodied ecological literacy alongside sensors and satellites.

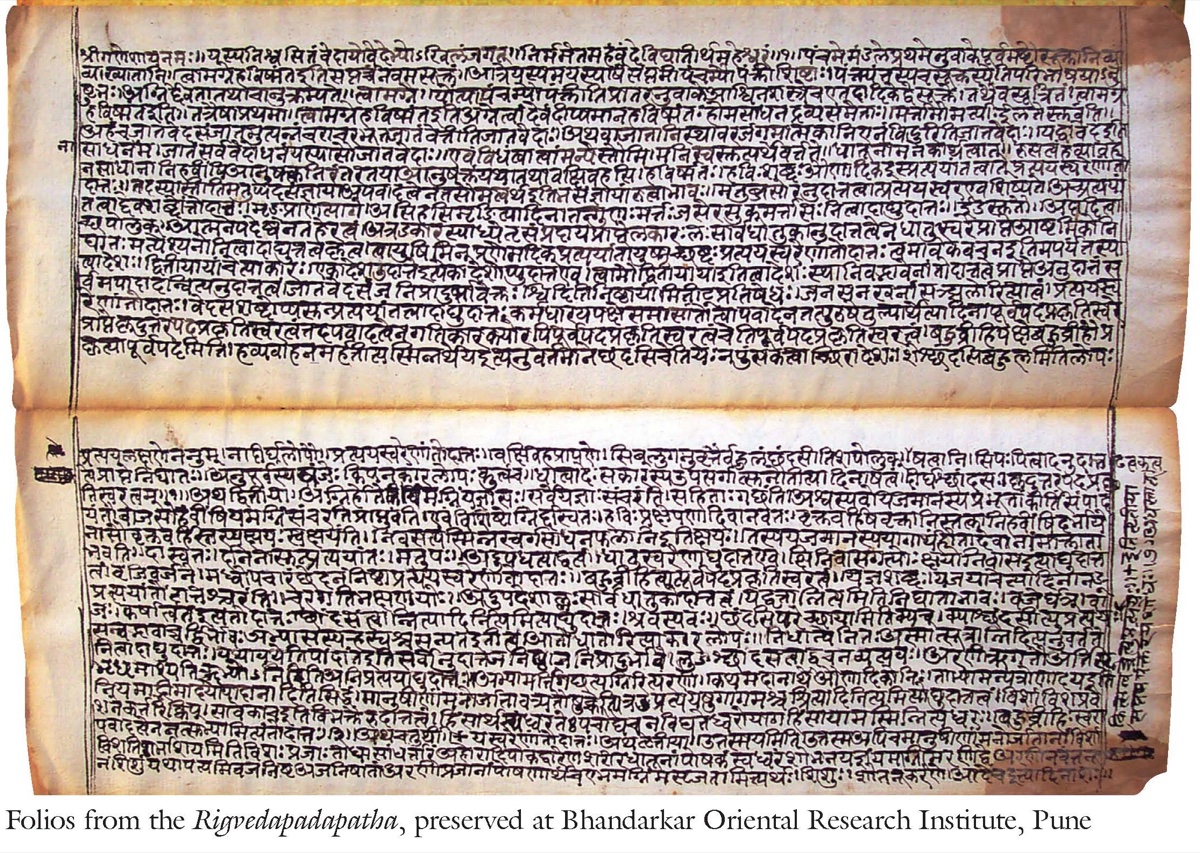

Source: National Mission for Manuscripts — Ministry of Culture, Government of India

The Rigveda manuscripts illustrate a lineage of careful observation anchored in ethics and responsibility. When global AI corpora omit such epistemologies, they encode a partial picture of human cognition. With consent and context, integrating these traditions can recalibrate AI toward deeper cultural inclusivity.

Governance as the Decisive Variable

AI can amplify or erase Indigenous knowledge. The difference is governance—who defines access, authorship, accountability, and the right to refuse.

- Free, Prior and Informed Consent (FPIC): Grounded in the United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP, 2007), consent should be given free of coercion, obtained before use, and based on information provided in appropriate languages. In AI contexts, it should also be renewed as models evolve.

- Benefit-sharing: The Nagoya Protocol (2014), which governs access to genetic resources and associated traditional knowledge, offers a template: revenue-sharing, co-ownership of IP, and investment in community priorities (language programs, cultural archives, ecological restoration).

- Data sovereignty: Communities determine what is collected, where it is stored, how it circulates, and when it must not be shared. Local Contexts TK Labels encode cultural protocols in metadata. Complementary frameworks include the CARE Principles for Indigenous Data Governance and OCAP® (First Nations principles of Ownership, Control, Access, and Possession).

A practical aspiration is co-stewardship with reciprocity: trace the ancestry of training inputs and route a share of value back to the custodians who sustain them.
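As a minimal sketch of that reciprocity loop, the Python below splits an agreed community share of revenue across custodians in proportion to how much of the training data each one stewards. The `TrainingInput` record, its `custodian` and `weight` fields, and the default `community_share` of 0.3 are all hypothetical; real splits would follow terms negotiated with each community, not a fixed formula.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingInput:
    """One training record with hypothetical lineage metadata."""
    record_id: str
    custodian: str  # community or knowledge-keeper who stewards the record
    weight: float   # assumed contribution measure, e.g. hours of audio


def route_value(inputs, revenue, community_share=0.3):
    """Split the community share of revenue across custodians, in
    proportion to the total weight of the inputs each one stewards.

    community_share is a hypothetical negotiated fraction, not a standard.
    """
    totals = defaultdict(float)
    for item in inputs:
        totals[item.custodian] += item.weight
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}  # nothing traced, so nothing to route
    pool = revenue * community_share
    return {name: pool * w / grand_total for name, w in totals.items()}


corpus = [
    TrainingInput("r1", "Custodian A", 30.0),
    TrainingInput("r2", "Custodian A", 10.0),
    TrainingInput("r3", "Custodian B", 60.0),
]
print(route_value(corpus, revenue=10_000.0))
# {'Custodian A': 1200.0, 'Custodian B': 1800.0}
```

Proportional weighting is only one option; a community might instead prefer equal shares, a revenue floor per custodian, or non-monetary returns.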

From Data to Design: Shared Accountability

Ethics should be implemented as product features and institutional routines.

Operational workflows

- FPIC registers with time-bounded consent, renewal schedules, and role-based approvals (elders, women knowledge-keepers, language custodians, youth councils).

- Protocol-aware APIs that read TK Labels and enforce access tiers (open, seasonal, ceremonial, restricted).

- Data localization and on-prem/edge options so communities can host and govern models locally.

- Model and data cards with explicit consent status, lineage, and restrictions.
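To make the first two workflows concrete, here is a minimal Python sketch of an FPIC register entry and the release check a protocol-aware API might run before serving an item. It assumes each item's access tier has already been derived from its TK Label, and the rule attached to each tier (seasonal items open only in listed months, ceremonial items only to approved roles, restricted items never served) is an illustrative assumption, not Local Contexts semantics. `ConsentEntry`, `may_release`, and `renewal_due` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Tier(Enum):
    """Access tiers named in the text; the rules applied below are assumptions."""
    OPEN = "open"
    SEASONAL = "seasonal"
    CEREMONIAL = "ceremonial"
    RESTRICTED = "restricted"


@dataclass
class ConsentEntry:
    """Hypothetical FPIC register entry with time-bounded consent."""
    item_id: str
    tier: Tier                 # assumed to be derived from the item's TK Label
    consent_expires: date      # consent lapses after this date
    approved_roles: set = field(default_factory=set)  # e.g. {"elder"}
    open_months: set = field(default_factory=set)     # months 1-12, SEASONAL only


def may_release(entry: ConsentEntry, requester_role: str, today: date) -> bool:
    """Return True only if consent is current and the tier's rule allows release."""
    if today > entry.consent_expires:
        return False  # lapsed consent closes every tier until renewed
    if entry.tier is Tier.RESTRICTED:
        return False  # never served through the API
    if entry.tier is Tier.OPEN:
        return True
    if entry.tier is Tier.SEASONAL:
        return today.month in entry.open_months
    return requester_role in entry.approved_roles  # CEREMONIAL


def renewal_due(entry: ConsentEntry, today: date, notice_days: int = 60) -> bool:
    """Flag entries whose consent expires within the notice window."""
    return (entry.consent_expires - today).days <= notice_days


song = ConsentEntry("song-17", Tier.CEREMONIAL, date(2025, 12, 31), {"elder"})
print(may_release(song, "elder", date(2025, 6, 1)))   # True
print(may_release(song, "public", date(2025, 6, 1)))  # False
print(renewal_due(song, date(2025, 11, 15)))          # True
```

Checking consent expiry before the tier means a lapsed renewal closes every item, open ones included, until the community acts; the default favors refusal over silent continuation.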

Evaluation and reporting

- Community-defined indicators: relevance to local priorities; cultural safety; language vitality; value-return ratio; and governance legitimacy (e.g., consent renewal rates).

- Mixed-method audits: circle dialogues and surveys combined with quantitative checks (fidelity-to-meaning, leakage tests for restricted content, bias metrics).

- Public accountability reports co-authored with community partners.
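Several of these indicators reduce to simple ratios once a community has agreed on what counts. The sketch below assumes hypothetical definitions: renewal rate as completed renewals over renewals due, value-return ratio as value returned to custodians over value generated, and leakage rate as the share of red-team outputs containing a restricted phrase. The substring match is a deliberately crude proxy; it misses paraphrased leaks, which is why community testers remain part of the audit.

```python
def consent_renewal_rate(renewals_due: int, renewals_completed: int) -> float:
    """Share of consent renewals that came due and were completed on time."""
    if renewals_due <= 0:
        raise ValueError("no renewals were due in this period")
    return renewals_completed / renewals_due


def value_return_ratio(value_returned: float, value_generated: float) -> float:
    """Share of the value a system generated that flowed back to custodians."""
    if value_generated <= 0:
        raise ValueError("value_generated must be positive")
    return value_returned / value_generated


def leakage_rate(outputs: list, restricted_phrases: list) -> float:
    """Share of red-team outputs that contain any restricted phrase.

    Case-insensitive substring matching is a crude proxy: it misses
    paraphrases, so community testers should still review outputs.
    """
    if not outputs:
        return 0.0
    phrases = [p.lower() for p in restricted_phrases]
    leaked = sum(1 for text in outputs if any(p in text.lower() for p in phrases))
    return leaked / len(outputs)


print(consent_renewal_rate(20, 17))           # 0.85
print(value_return_ratio(2_500.0, 10_000.0))  # 0.25
print(leakage_rate(["The River Song begins...", "Rain is likely."], ["river song"]))  # 0.5
```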

Repair pathways

- Pre-agreed steps for harm: acknowledgement, takedown or model rollback, retraining with corrected data, and restitution.

Accessibility, Inclusion, and Capacity

Practical equity requires inclusive design:

- Language technology: support for speech-to-text in local languages, community lexicons, and dialectal variation; models fine-tuned with community-approved corpora.

- Connectivity constraints: offline-first and low-bandwidth modes; edge devices for clinics, schools, and cultural centers.

- Disability inclusion: accessible interfaces (screen-reader compatibility, captioning, high-contrast modes) aligned with community norms.

- Capacity building: funded fellowships, apprenticeships for youth, and long-term maintenance plans so communities operate their own pipelines.

Risks and Limitations

- Leakage and inversion: Restricted content may surface in model outputs when prompted, or be reconstructed through model-inversion attacks. Mitigation: TK-aware filters, red-teaming with community testers, and strict evaluation gates before release.

- Governance fatigue: Repeated consent requests can burden councils and elders. Mitigation: batching, renewal windows, compensated time, and clear dashboards.

- Jurisdictional conflicts: National open-data laws may clash with community protocols. Mitigation: data residency controls, MOUs with authorities, and legal counsel.

- Over-digitization: Not all knowledge should be recorded. Mitigation: uphold the legitimate right not to share, and designate seasonal and silent knowledge categories.

- Uneven benefits: Value may pool with vendors. Mitigation: shared IP clauses, revenue floors, and independent audits of benefit flows.
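Batching is the most mechanical of these mitigations. A minimal sketch, assuming a quarterly renewal cadence (a hypothetical choice; a council might prefer seasonal or ceremonial calendars): consent items are grouped by the quarter in which their consent expires, so each council reviews one bundle per window instead of fielding requests one at a time. Item ids and dates are illustrative.

```python
from collections import defaultdict
from datetime import date


def renewal_window(expiry: date) -> tuple:
    """Map an expiry date to a (year, quarter) window; quarterly cadence is assumed."""
    return (expiry.year, (expiry.month - 1) // 3 + 1)


def batch_renewals(expiries: dict) -> dict:
    """Group item ids by renewal window so a council reviews each window once.

    expiries maps a hypothetical item id to its consent expiry date.
    """
    batches = defaultdict(list)
    for item_id, expiry in expiries.items():
        batches[renewal_window(expiry)].append(item_id)
    return {window: sorted(ids) for window, ids in sorted(batches.items())}


print(batch_renewals({
    "song-17": date(2025, 2, 10),
    "map-3": date(2025, 3, 30),
    "story-9": date(2025, 8, 1),
}))
# {(2025, 1): ['map-3', 'song-17'], (2025, 3): ['story-9']}
```

Here three separate requests become two review sessions; compensated time and clear dashboards address the burden that batching alone cannot.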

Mini-Cases (Patterns)

- Language Archive Co-Governance (Hypothetical Pattern): A regional archive deploys a speech model trained only on consented recordings; TK Labels prevent export of ceremonial recordings; revenue from API calls funds community teachers and equipment.

- On-Country Climate Adapter (Observed Pattern in Literature): Indigenous seasonal indicators are integrated into flood warnings; a community PI chairs the governance board; benefit-sharing funds wetland restoration.

- Museum Digitization Reset (Increasing Practice): A museum replaces “open by default” with situated openness; sacred images are withdrawn from public endpoints and made viewable only on-prem under cultural supervision.

Pathways Toward Regenerative AI

To align AI with Indigenous sovereignty, prioritize:

- Rights-holder status, not stakeholder status

- Community benefit over data extraction

- Lifecycle control—from scoping to retirement

- Enforceable benefit-sharing and value return

- Local technical capacity for self-governance

When AI internalizes the relational logic of IKS—accountability to land, lineage, and community—it functions as a regenerative tool rather than an extractive engine. The aim is not only ethical AI but culturally intelligent AI—systems that speak many languages: human, ecological, and ancestral, always with consent.

Reflections from Three Perspectives

Education Technology: Translate principles into deployable architecture—FPIC registers, protocol-aware APIs, data localization, and standard governance artifacts (model/data cards, risk registers).

Research Methods and Data: Pair community-defined indicators with mixed-method audits, pre-registered evaluation plans, and public, co-authored accountability reports.

Contrarian Innovation: Treat relationships as covenants, favor situated openness, frame benefit-sharing as repair, and include place-based metrics that center land and language.

Concluding View

Some knowledge should travel, some should travel slowly, and some should not travel at all. Openness serves life when guided by consent, context, and care. Intelligence, in this view, is responsibility made visible. AI can learn to keep good company—with people, places, and time—when governance, design, and measurement align.

Suggested Readings


- Anderson & Christen (2019). Decolonizing Attribution: Traditions of Exclusion. Journal of Radical Librarianship, 5, 113–152.

- Berkes (2018). Sacred Ecology (4th ed.). Routledge.

- Chakravarty & Gattupalli (2024). Integration of Indigenous Traditional Knowledge and AI in Hurricane Resilience and Adaptation. Springer Nature Switzerland.

- Global Indigenous Data Alliance. CARE Principles.

- First Nations Information Governance Centre. OCAP®.

- McGregor (2004). Coming full circle: Indigenous knowledge, environment, and our future. American Indian Quarterly, 28(3/4), 385–410.

- Walter et al. (2021). Indigenous data sovereignty in the era of big data and open data. Australian Journal of Social Issues, 56(2), 143–156.

- Whyte (2018). Indigenous science (fiction) for the Anthropocene. Environment and Planning E, 1(1–2), 224–242.

This piece forms part of a continuing discussion about the governance of Indigenous Knowledge in AI systems. Reader feedback is welcome at feedback@societyandai.org.