Beyond Human-Centered Design

The dominant paradigm in responsible AI development has been human-centered design: building systems that are usable, accessible, and aligned with individual user needs. This approach, articulated most comprehensively by Ben Shneiderman in his framework for Human-Centered AI, emphasizes reliability, safety, and trustworthiness as core design principles (Shneiderman, 2020). Yet as AI systems increasingly mediate collective decisions—in education, healthcare, housing, criminal justice, and democratic participation—individual usability proves insufficient. The question is no longer simply whether a system works for its users, but whether it serves the communities it affects and the publics it shapes.

Society-centered AI begins with a different premise: the people most affected by a system should help define the problem, shape the solution, and govern its use. Rather than treating communities as data sources or end users, this approach recognizes them as co-designers, co-owners, and co-stewards of technology that touches their lives. The goal is not merely better adoption or improved user experience. It is legitimacy: systems that align with local values, distribute benefits fairly, and remain accountable over time.

Defining Society-Centered AI

Society-centered AI refers to the design, deployment, and governance of artificial intelligence in which affected communities share authority over problem framing, data use, evaluation, and adaptation. It extends the principles of human-centered design into collective, civic, and institutional contexts—embedding public participation throughout the AI lifecycle.

This orientation draws on what Sasha Costanza-Chock describes as “design justice”: the principle that design processes should center the voices of those who are directly impacted by the outcomes, particularly communities that have been historically marginalized by technological systems (Costanza-Chock, 2020). Where conventional AI development asks “How can we build this system responsibly?”, society-centered AI asks “Who has the right to decide what ‘responsible’ means, and how do we ensure they are at the table?”

How the Design Cycle Changes

The design cycle changes when communities lead. Problem framing moves from “How can AI optimize X?” to “What is the human purpose, who is served, and what harms must be avoided?” Requirements gathering expands to include lived experience, cultural practices, and constraints such as bandwidth, cost, language, and accessibility.

Data collection becomes reciprocal rather than extractive, with clear consent, transparent uses, and the option to withdraw. Evaluation focuses not just on accuracy but on whether the system strengthens agency, trust, and opportunity. These commitments align with the principles of Value Sensitive Design, which argues that human values must be accounted for throughout the design process—not as afterthoughts, but as primary design requirements (Friedman & Hendry, 2019).
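One way to make "clear consent, transparent uses, and the option to withdraw" concrete is to record consent per named use and check it before any data is touched. The following is a minimal sketch, not a prescribed implementation: the `ConsentRecord` class, its field names, and the use labels such as `"model_training"` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    """Hypothetical record of one participant's consent for specific, named uses."""
    participant_id: str
    permitted_uses: set[str]
    granted_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    withdrawn_at: Optional[datetime] = None

    def withdraw(self) -> None:
        """Mark consent as withdrawn; the record is kept so the decision stays auditable."""
        if self.withdrawn_at is None:
            self.withdrawn_at = datetime.now(timezone.utc)

    def allows(self, use: str) -> bool:
        """True only if consent is still active and covers this exact use."""
        return self.withdrawn_at is None and use in self.permitted_uses


def usable_records(records: list[ConsentRecord], use: str) -> list[ConsentRecord]:
    """Keep only the records whose consent currently covers the requested use."""
    return [r for r in records if r.allows(use)]


records = [
    ConsentRecord("p1", {"model_training", "evaluation"}),
    ConsentRecord("p2", {"evaluation"}),
]
records[0].withdraw()
print([r.participant_id for r in usable_records(records, "evaluation")])  # prints ['p2']
```

The design choice worth noting is that withdrawal is checked at the point of use rather than at collection time, so a participant's later decision takes effect for every subsequent use.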

The Five Phases of Society-Centered AI

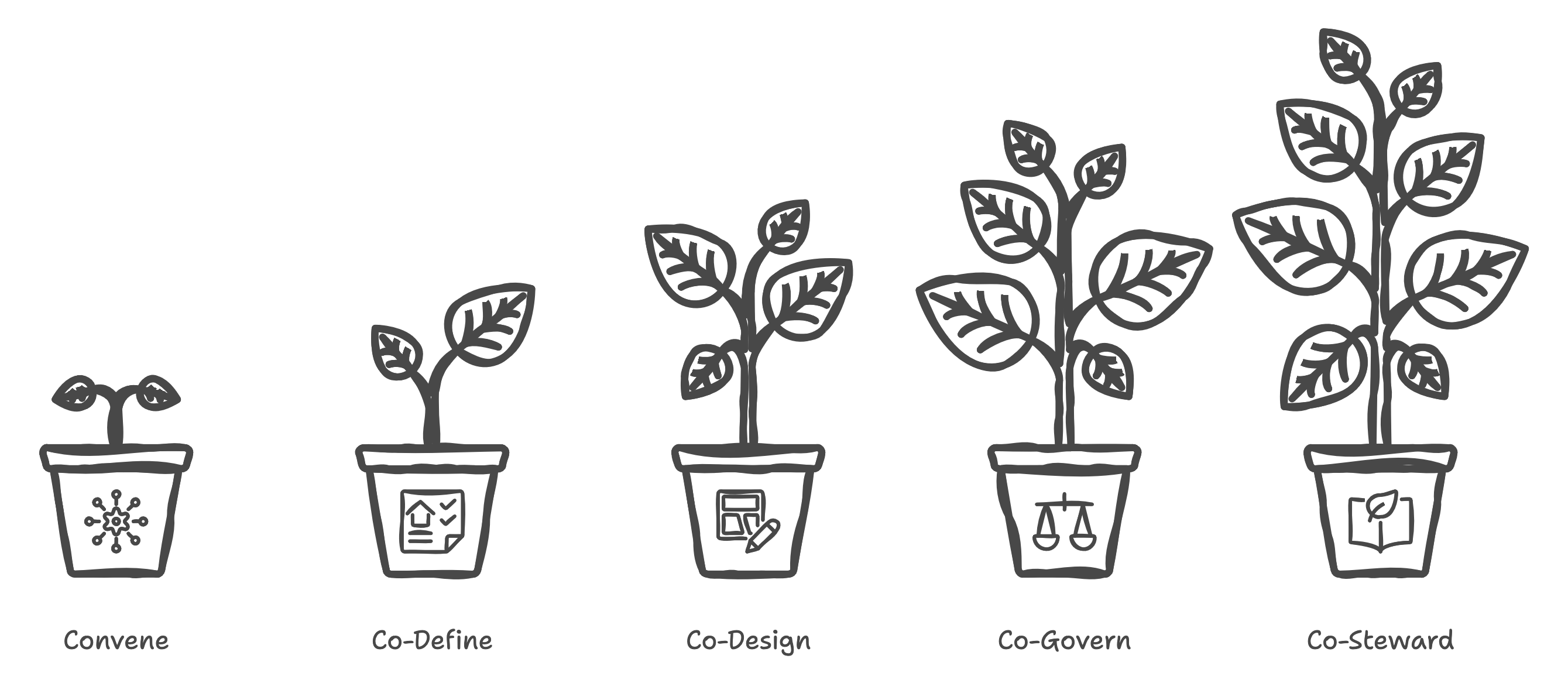

A society-centered process typically follows five phases:

- Convene a representative coalition that includes community members, domain experts, implementers, and skeptics. Representation matters: those who bear the risks of a system should have voice in its creation.

- Co-define goals, success metrics, and boundaries using structured methods such as problem trees, stakeholder maps, and risk registers. This phase surfaces disagreements early, when they can still shape design rather than derail deployment.

- Co-design prototypes that are testable in low-risk settings, capturing qualitative feedback alongside quantitative metrics. Iteration with community input prevents the calcification of early assumptions.

- Co-govern deployment through participatory policies: eligibility rules, escalation paths, audit rights, and redress mechanisms. Governance is not a one-time approval but an ongoing practice.

- Co-steward learning, with scheduled reviews that can adjust or retire the system when conditions change. Systems that cannot be questioned cannot be trusted.

The accompanying illustration, “Society-Centered AI: The Five Phases of Collaborative Design,” visualizes these five stages as a continuous, participatory cycle, showing how collective intelligence and civic stewardship guide every phase of responsible AI design.

Operational Principles

The operational principles of society-centered AI are pragmatic, not aspirational. Use plain-language documentation and multilingual materials so participation is real, not symbolic. Publish model cards and data statements that describe sources, limitations, and known risks. Implement grievance channels that respond quickly and track resolution.

Share benefits: if community knowledge improves a model, the community should see tangible returns—funding for local programs, capacity building, or shared intellectual property where appropriate. Finally, measure what communities value, not only what engineers can easily count.
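As a rough illustration of the documentation principle above, a model card can be kept as a small structured record and rendered in plain language. This is a sketch under stated assumptions: the `ModelCard` class and its fields are hypothetical, loosely following the sources, limitations, and known risks named in the text, and the example values are invented placeholders rather than a description of any real system.

```python
from dataclasses import dataclass


@dataclass
class ModelCard:
    """Hypothetical minimal model card; field names are illustrative only."""
    name: str
    intended_use: str
    data_sources: list[str]
    limitations: list[str]
    known_risks: list[str]
    grievance_contact: str

    def missing_sections(self) -> list[str]:
        """Names of sections left empty, so gaps are visible before publishing."""
        return [key for key, value in vars(self).items() if not value]

    def to_text(self) -> str:
        """Render the card as plain text that can be translated or read aloud."""
        lines = [f"Model: {self.name}", f"Intended use: {self.intended_use}"]
        for title, items in [
            ("Data sources", self.data_sources),
            ("Limitations", self.limitations),
            ("Known risks", self.known_risks),
        ]:
            lines.append(f"{title}:")
            lines.extend(f"  - {item}" for item in items)
        lines.append(f"Report a problem: {self.grievance_contact}")
        return "\n".join(lines)


# Placeholder values for illustration only.
card = ModelCard(
    name="example-eligibility-screener",
    intended_use="Suggest cases for human review; never decide alone.",
    data_sources=["Application forms collected with consent"],
    limitations=[],
    known_risks=["May under-serve applicants with incomplete records"],
    grievance_contact="community-review-board@example.org",
)
print(card.missing_sections())  # prints ['limitations']
print(card.to_text())
```

Checking for empty sections before publication is one simple way to keep a card from implying that a system has no limitations when none were written down.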

Reshaping Incentives for Builders

This approach reshapes incentives for builders. Product teams are rewarded for reducing harm, not just launching features. Roadmaps include time for community consultation, accessibility reviews, and ethics testing. Procurement favors open standards, modular architectures, and portability, so communities can switch providers without losing their data or rights.

Governance shifts from one-time approvals to continuous oversight, with independent audits and public reporting. As Costanza-Chock argues, design processes that exclude affected communities reproduce existing inequalities; processes that include them create possibilities for transformation (Costanza-Chock, 2020).

A Posture, Not Just a Process

Society-centered AI is also a posture. It requires humility about what models can and cannot do. It asks practitioners to treat lived experience as a form of expertise. It emphasizes reversible choices: default to pilots, minimize irreversible commitments, and design for graceful exits.

When stakes are high—education, health, housing, benefits—humans must remain at the controls for consequential decisions. Automation should support human judgment, not supplant it.

The Deeper Promise

The deeper promise of society-centered AI is cultural: technology becomes a site of civic learning. Communities practice deliberation, weigh trade-offs, and exercise stewardship. Institutions learn to share power and update rules. Engineers learn to translate technical detail without obscuring risk.

Over time, this practice can strengthen the social fabric, because systems are not merely deployed; they are co-authored and co-owned. In this sense, society-centered AI both complements and extends human-centered design by embedding collective governance into every phase of the AI lifecycle.

Conclusion

Society-centered AI is not a slogan. It is a discipline. It replaces one-way design with reciprocal making and continuous governance. It treats communities as partners in defining the future they must live with—and ensures AI earns its place by serving that future well.

The traditions it draws upon—participatory design, value sensitive design, design justice, and civic technology—remind us that these questions are not new. What is new is the scale, speed, and opacity of AI systems, which make democratic participation both more difficult and more necessary. Society-centered AI is our answer to that challenge: technology accountable to the people it serves.

References

Costanza-Chock, S. (2020). Design justice: Community-led practices to build the worlds we need. MIT Press. https://doi.org/10.7551/mitpress/12255.001.0001

Friedman, B., & Hendry, D. G. (2019). Value sensitive design: Shaping technology with moral imagination. MIT Press. https://doi.org/10.7551/mitpress/7585.001.0001

Shneiderman, B. (2020). Human-centered AI. Oxford University Press.